Composable Prompts is more than an LLM application development framework

This comprehensive platform lets enterprise teams design, test, deploy, and operate LLM-powered tasks to drive efficiency, improve performance, and lower costs.

Compose powerful prompts, and reuse them across your applications

Reuse tested prompts and compose them into more complex ones. Each prompt also comes with an input schema and an output schema, so type safety strengthens the quality of every interaction.
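To make the idea of composable, schema-checked prompts concrete, here is a minimal sketch in plain Python. It is not the Composable Prompts API: the `Prompt` class, `compose`, and `parse_output` are hypothetical names chosen for illustration, and schemas are assumed to be simple mappings from field name to Python type.

```python
import json
from dataclasses import dataclass
from string import Template


@dataclass(frozen=True)
class Prompt:
    """A reusable prompt segment with a declared input schema (hypothetical)."""
    template: str
    input_schema: dict  # field name -> expected Python type

    def render(self, **values) -> str:
        # Reject missing or mistyped inputs before anything reaches a model.
        for name, expected in self.input_schema.items():
            if name not in values:
                raise ValueError(f"missing input: {name}")
            if not isinstance(values[name], expected):
                raise TypeError(f"{name} must be {expected.__name__}")
        return Template(self.template).substitute(values)


def compose(*prompts: Prompt) -> Prompt:
    """Join tested prompts into a larger one, merging their input schemas."""
    merged = {}
    for p in prompts:
        merged.update(p.input_schema)
    return Prompt("\n".join(p.template for p in prompts), merged)


def parse_output(raw: str, output_schema: dict) -> dict:
    """Check a model's JSON reply against the declared output schema."""
    data = json.loads(raw)
    for name, expected in output_schema.items():
        if not isinstance(data.get(name), expected):
            raise TypeError(f"output field {name} must be {expected.__name__}")
    return data


# Two small, independently tested prompts composed into one task.
tone = Prompt("Answer in a $tone tone.", {"tone": str})
summarize = Prompt(
    "Summarize in $max_words words: $text",
    {"max_words": int, "text": str},
)
task = compose(tone, summarize)
```

Calling `task.render(tone="formal", max_words=20, text="...")` yields the combined prompt, while passing `max_words="20"` fails early with a `TypeError` instead of producing a malformed request.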

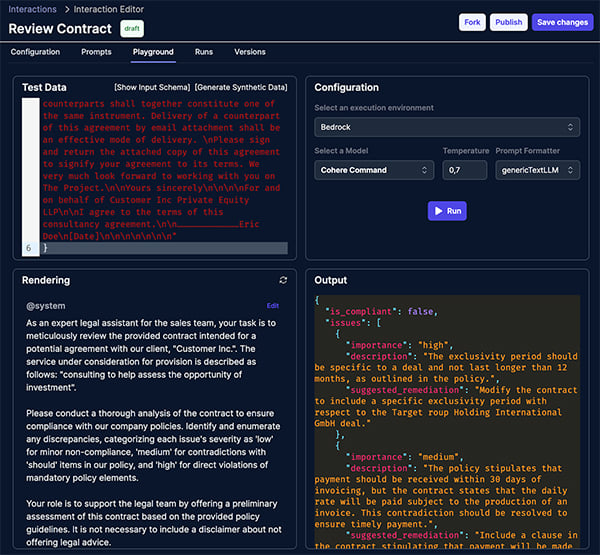

Leverage multiple AI models and environments

Easily test prompts in different environments, including OpenAI, AWS Bedrock, Replicate, Groq, Google Vertex AI, Hugging Face, and Together AI. Use different models for different use cases. Prompts are automatically converted to the target model's format without any change on your side. Want to assign a task to Llama 2 instead of GPT-4 in 30% of cases? No problem.
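The 30% split mentioned above amounts to weighted random routing. The following sketch shows one way such a split could work, using only the Python standard library. The `pick_model` helper and the model labels are illustrative assumptions, not the platform's actual configuration or API.

```python
import random


def pick_model(weights: dict, rng: random.Random) -> str:
    """Pick a model name at random, proportionally to its weight."""
    names = list(weights)
    return rng.choices(names, weights=[weights[n] for n in names], k=1)[0]


# Hypothetical split: 70% of calls to GPT-4, 30% to Llama 2.
weights = {"gpt-4": 0.7, "llama-2": 0.3}

# A seeded generator keeps the simulation reproducible.
rng = random.Random(42)
counts = {name: 0 for name in weights}
for _ in range(10_000):
    counts[pick_model(weights, rng)] += 1

llama_share = counts["llama-2"] / 10_000
```

Over many calls, `llama_share` settles close to 0.3. Any single call can still go to either model, which is the point of a probabilistic split.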

Optimize performance and cost while keeping the data fresh

You can design an intelligent cache strategy for each interaction, so a result can be reused, varied on a set of keys, or served from cache in only a certain percentage of calls.
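As a rough illustration of such a strategy, the sketch below caches results per interaction, varies the cache on a chosen subset of inputs, and reuses a cached result only for a configurable share of calls. `CachedTask`, `vary_on`, and `reuse_rate` are hypothetical names, `fake_model` stands in for a real inference call, and input values are assumed to be hashable.

```python
import random


class CachedTask:
    """Wrap a task with a cache keyed on selected inputs (hypothetical)."""

    def __init__(self, run, vary_on, reuse_rate, rng=None):
        self.run = run                # callable that performs the real call
        self.vary_on = vary_on        # input names that form the cache key
        self.reuse_rate = reuse_rate  # share of calls allowed to reuse a hit
        self.rng = rng or random.Random()
        self.store = {}

    def __call__(self, **inputs):
        # Inputs outside `vary_on` do not affect the cache key.
        key = tuple(inputs[name] for name in self.vary_on)
        if key in self.store and self.rng.random() < self.reuse_rate:
            return self.store[key]
        # Cache miss, or a call chosen to refresh the stored result.
        result = self.run(**inputs)
        self.store[key] = result
        return result


calls = []


def fake_model(text, user_id):
    """Stand-in for an LLM call; records each invocation."""
    calls.append(text)
    return text.upper()


task = CachedTask(fake_model, vary_on=("text",), reuse_rate=1.0)
first = task(text="hello", user_id="a")
second = task(text="hello", user_id="b")  # same key, served from cache
```

Setting `reuse_rate` below 1.0 sends a share of calls to the model even on a cache hit, which refreshes the stored result and helps keep the data fresh.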

Easily Design LLM Prompts & Tasks

Governance

End-to-end governance of LLM agents and LLM-powered tasks. Know which task is deployed, which application uses it, what it does, and what data has been sent & received.

Security

Dramatically reduce the attack surface with fine-grained security: keys restricted to tasks, short-lived restricted public keys, audit history with advanced search across runs, automated key rotation, and more.

Orchestration

Execute tasks on any inference provider and model through easy-to-use API endpoints. Use and reuse results, thanks to persistence, indexing, and search.

Test Multiple Models & Safely Deploy in Production

Virtualization

Create a synthetic LLM by mixing several LLMs and choosing the appropriate distribution strategy: weighted load balancing, multi-head execution, mediator, or intelligent routing.

Analytics

Track model performance: visualize result quality and follow speed and latency.

Monitoring

Capture each run, including inputs and results, and monitor availability and performance.

Efficiently Operate LLM-Powered Tasks

Integrated with Leading Generative AI Model Providers

OpenAI

Amazon Bedrock

Google Vertex AI

Groq

Replicate

Together AI

Anthropic

Mistral AI

AI21 Labs

Hugging Face

Augment Applications & Workflows

With Composable Prompts' design studio, advanced LLM virtualization layer, and orchestration engine, enterprise organizations can leverage LLM technology for a variety of use cases.

Information Extraction

Extract structured data from text content to feed into or update other systems

Training / Testing

Generate unique tests and correct them against reference information to provide in-context training

Large Content Generation

Generate large documents, such as contracts

Document Review

Review documents against a set of rules and highlight the issues

Code Assistance

Help generate code for developers and technical users

Content Copilot

Generate or propose text in context as the user creates content

Latest Blogs

In recent years, Large Language Models (LLMs) have emerged as a powerful tool in digital...

Composable Prompts Secures $4 Million in Seed Funding to Spearhead Enterprise Adoption of Large...